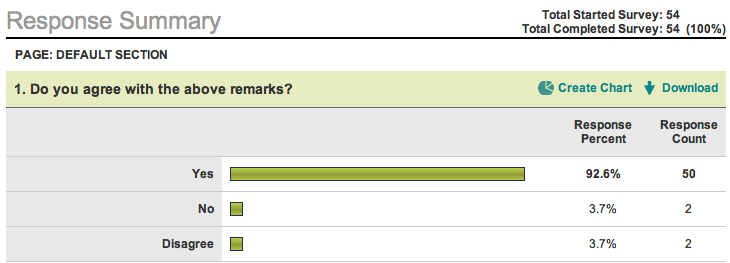

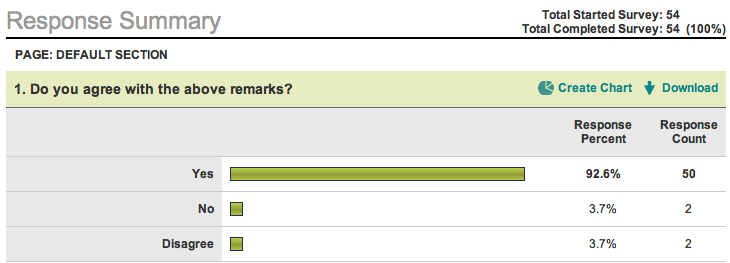

A few weeks ago, I conducted a survey, and the details follow. My special thanks to all contributors!

Quantification of Scientific Researchers!

I believe that it is not correct to rate or rank 'all' computer scientists on the basis of the quantity of their research papers, the impact factor of the journals they publish in, or various other indices (e.g., h-index, g-index). To date, we do not have a reliable bibliographic measure for comparing the value of the work done by scientists. Bibliographic measures appropriate in one field (e.g., theoretical computer science) are inappropriate in another (e.g., parallel computing). Someone working in parallel computing, human-computer interaction or computer security has a different level of visibility (and hence a different likelihood of being cited), because visibility depends on the number of people working in the same field. It is therefore bizarre that one's status could be determined by mere numbers, which can also be utterly misleading and damaging. Perhaps the only real criterion for an individual's scholarship is the actual quality of the work, not mere figures.

Ajith Abraham

June 03, 2010

Survey link: http://www.surveymonkey.com/s/DKD6NP7

Copied below are a summary of the responses and some comments from over 50 research scientists.

Comments from Some Researchers

1. Life is hard :-))

2. It depends on the contributions of the research.

3. I think every scholar may work in his own way. Just be happy when you do research. Don't care about the rank too much.

4. You are totally correct in your assertion. The present system of relying on the number of citations to rank researchers is very misleading. For example, we have heard of situations in which a senior researcher makes it compulsory for everyone working under him to cite his work (whether relevant or not) in every paper they publish or co-publish. You can imagine this scenario!!! Getting cited when in reality the work has nothing to do with the ongoing work!!! I fully agree with you, but what proposal do you have for a better way to do this? I also hope you can look into the problems with peer review, where some reviewers deliberately condemn a paper for personal reasons (perhaps because the paper's results pose a threat to their earlier or ongoing research) without recourse to the content of the paper. Thanks and regards.

5. Quality of work is also very subjective. If the work is never cited, or only self-cited, that is indicative of something with regard to the quality of the work, regardless of the field.

6. But I feel that the standard of the journal/conference in which the paper is published is indeed a criterion. Published reviews by known experts in the field can also be considered a criterion.

7. Whenever judgement is involved, I believe this survey should allow for a wider range of opinions, such as:

- Yes, but not in all cases
- Totally disagree

8. It is surely true that it depends on the domain a researcher works in. However, one compares oneself with other researchers in the same field, where these metrics are comparable. These metrics are, or at least should be, used for career purposes only within the same field.

9. I agree, and moreover I am among those who want to "Stop the Numbers Game": Prof. Dr. David Parnas (a pioneer in software engineering) has joined the group of scientists who openly criticize the number-of-publications-based approach to ranking academic production. In his November 2007 paper "Stop the Numbers Game", he elaborates on several reasons why the current number-based academic evaluation system, used in many fields by universities all over the world (whether based on the number of publications or on the number of citations each receives), is flawed and, instead of advancing science, leads to knowledge stagnation.

10. In order to understand the issue, let's consider the extremes: one paper by great scholars like A. Einstein, N. Koblitz, Diffie and Hellman, etc. is worth hundreds of ordinary papers. And since there is no known way to assign "weights" to papers, there is no way to measure the quality of a scientist.

It is slightly different for journals. When a paper is submitted, unless it is really poorly written, it is accepted or rejected on the basis of the opinion of the Editor plus two or three randomly assigned reviewers. The latter are themselves busy scholars and try to minimize their involvement. For them it is much easier to reject a paper they do not understand "on the fly" than to accept it. Therefore, potentially innovative discoveries are very often rejected, while a well-written, understandable but mediocre paper has a higher chance of reaching readers.

Here we come to the most important issue: maybe it is time to add one more component to the screening process, namely the readers, i.e., the scientific libraries.

What I am suggesting has been on my mind for the last 35-40 years, but I have never published it anywhere. Let's consider well-established journals, not journals that publish everything.

Well-established, reputable journals have more papers in their portfolio than they can publish. Therefore the reviewing process is longer, the rejection rate is higher (they must reject papers to avoid infinite average delays; see the basics of queuing theory), and the waiting period for publication is longer. Yet the Editor-in-Chief can, and should, send the list of papers waiting to be published to the scientific libraries and ask them to respond with their priorities. The papers with the highest combined priorities would then be published first. By the way, this is also a good marketing strategy for an Editor-in-Chief who wants libraries to subscribe to her/his journal.

Maybe you should publish my ideas in your journals. Of course, I will edit the text. Just let me know.

11. If Albert Einstein had only 5 papers and I have 21, that does not make me great; it is as simple as that. The very attempt to quantify is a symptom of failure. A medical professional has no reason to appreciate Einstein as much as a physicist does, and may give him a very poor rating. What is important is to promote the pursuit of knowledge, discourage plagiarism and unhealthy competition for citations, and have respect for one and all. Each one of us is what we are because of what others have contributed; even my maid has a role in my success. Do not underestimate anyone, and never stop saying thank you!
